Preserving Complex Object-Centric Graph Structures to Improve Machine Learning Tasks in Process Mining

Abstract

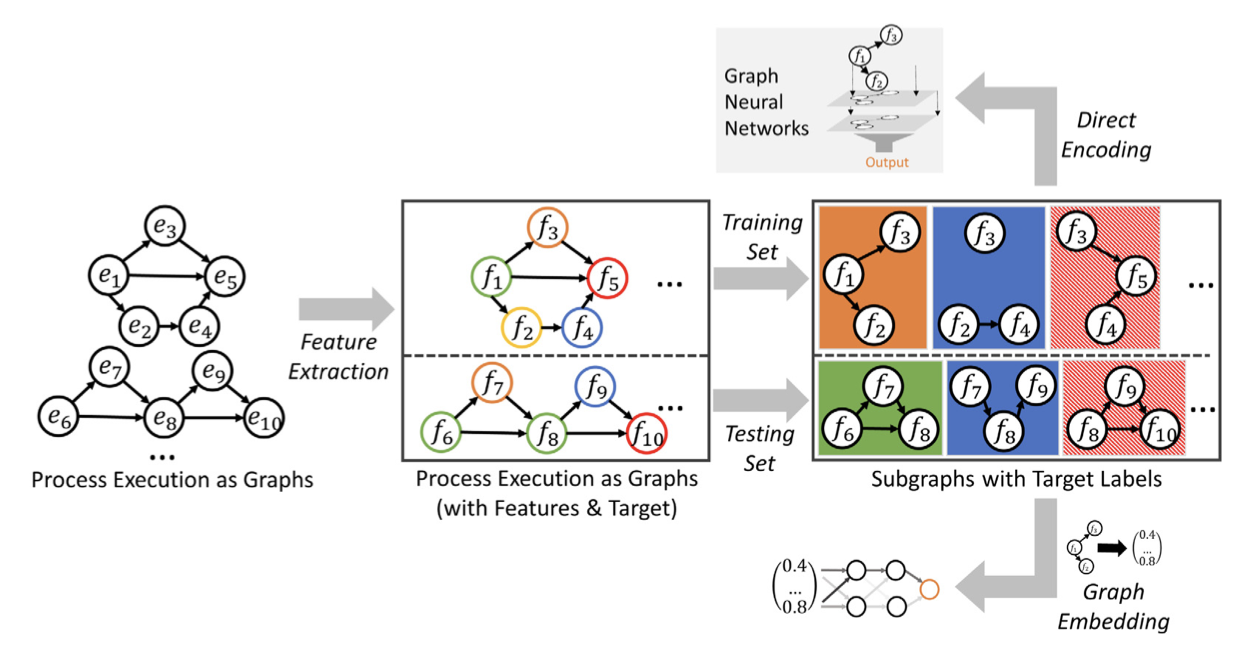

Interactions of multiple processes and different objects can be captured using object-centric event data, which represent process executions as event graphs of interacting objects. When machine learning techniques are applied to object-centric event data, the event log currently has to be flattened into sequential process executions that serve as input for traditional process mining approaches. However, sequentializing the events by flattening removes the graph structure of object-centric event data and therefore constitutes an information loss. In this paper, we present a general approach to preserve the graph structures of object-centric event data across machine learning tasks in process mining. We provide two techniques to preserve these structures, depending on the input format a machine learning technique requires: direct graph encodings and graph embeddings. We evaluate our contributions by applying three different predictive process monitoring tasks to direct graph encodings, graph embeddings, and flattened event logs. Based on the relative performances, we assess how much of the information contained in the graph structure of object-centric event logs is currently lost in machine learning approaches for traditional process mining. Furthermore, we compare different graph embedding techniques for object-centric event logs to derive recommendations for future research and deployment. Finally, we assess the improvement that graph embeddings offer over state-of-the-art approaches that capture object information through specific features. Our contributions improve predictive process monitoring on object-centric event data and quantify the potential performance gains of predictive models.
These contributions are especially relevant for real-life applications of predictive process monitoring, which often run on information systems whose relational databases contain multiple objects, yet currently still require flattening.
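To make the information loss of flattening concrete, the following minimal sketch contrasts an event graph with flattened event logs for a hypothetical toy log of one order and two items. The log, the function names `event_graph` and `flatten`, and the edge rule (link consecutive events that share an object) are illustrative assumptions, not the construction used in this paper.

```python
from collections import defaultdict

# Hypothetical toy object-centric event log: each event has an id, an activity,
# a timestamp, and the objects (grouped by object type) it refers to.
EVENTS = [
    {"id": "e1", "activity": "place order", "time": 1,
     "objects": {"order": ["o1"], "item": ["i1", "i2"]}},
    {"id": "e2", "activity": "pick item", "time": 2, "objects": {"item": ["i1"]}},
    {"id": "e3", "activity": "pick item", "time": 3, "objects": {"item": ["i2"]}},
    {"id": "e4", "activity": "ship", "time": 4,
     "objects": {"order": ["o1"], "item": ["i1", "i2"]}},
]


def event_graph(events):
    """Return directed edges linking consecutive events of each object.

    Assumed, simplified construction: for every object, order its events by
    time and connect each event to the next one.
    """
    per_object = defaultdict(list)
    for ev in sorted(events, key=lambda e: e["time"]):
        for objs in ev["objects"].values():
            for obj in objs:
                per_object[obj].append(ev["id"])
    edges = set()
    for seq in per_object.values():
        edges.update(zip(seq, seq[1:]))  # consecutive pairs per object
    return edges


def flatten(events, object_type):
    """Flatten onto one object type: each object of that type becomes a case.

    Events without an object of this type are dropped; events with several
    such objects are copied into every corresponding case.
    """
    cases = defaultdict(list)
    for ev in sorted(events, key=lambda e: e["time"]):
        for obj in ev["objects"].get(object_type, []):
            cases[obj].append(ev["activity"])
    return dict(cases)


if __name__ == "__main__":
    print(sorted(event_graph(EVENTS)))
    print(flatten(EVENTS, "order"))
    print(flatten(EVENTS, "item"))
```

In this toy log, the event graph keeps all four events and shows that the two picking events run in parallel between placing and shipping the order. Flattening onto `order` drops both picking events, while flattening onto `item` duplicates `e1` and `e4` into two separate cases, so the fact that both items share a single order is no longer visible.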